Self-Execution Simulation Improves Coding Models

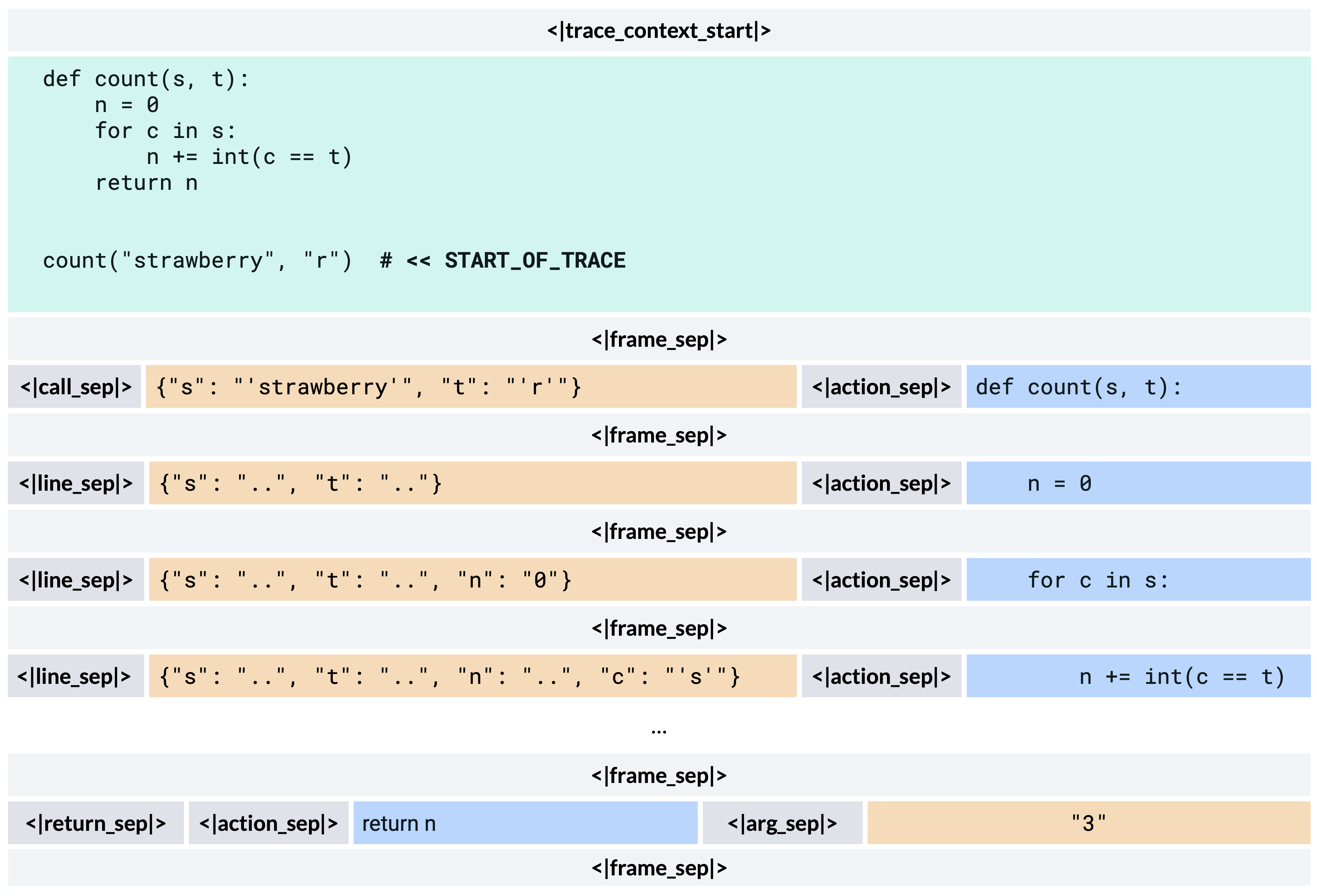

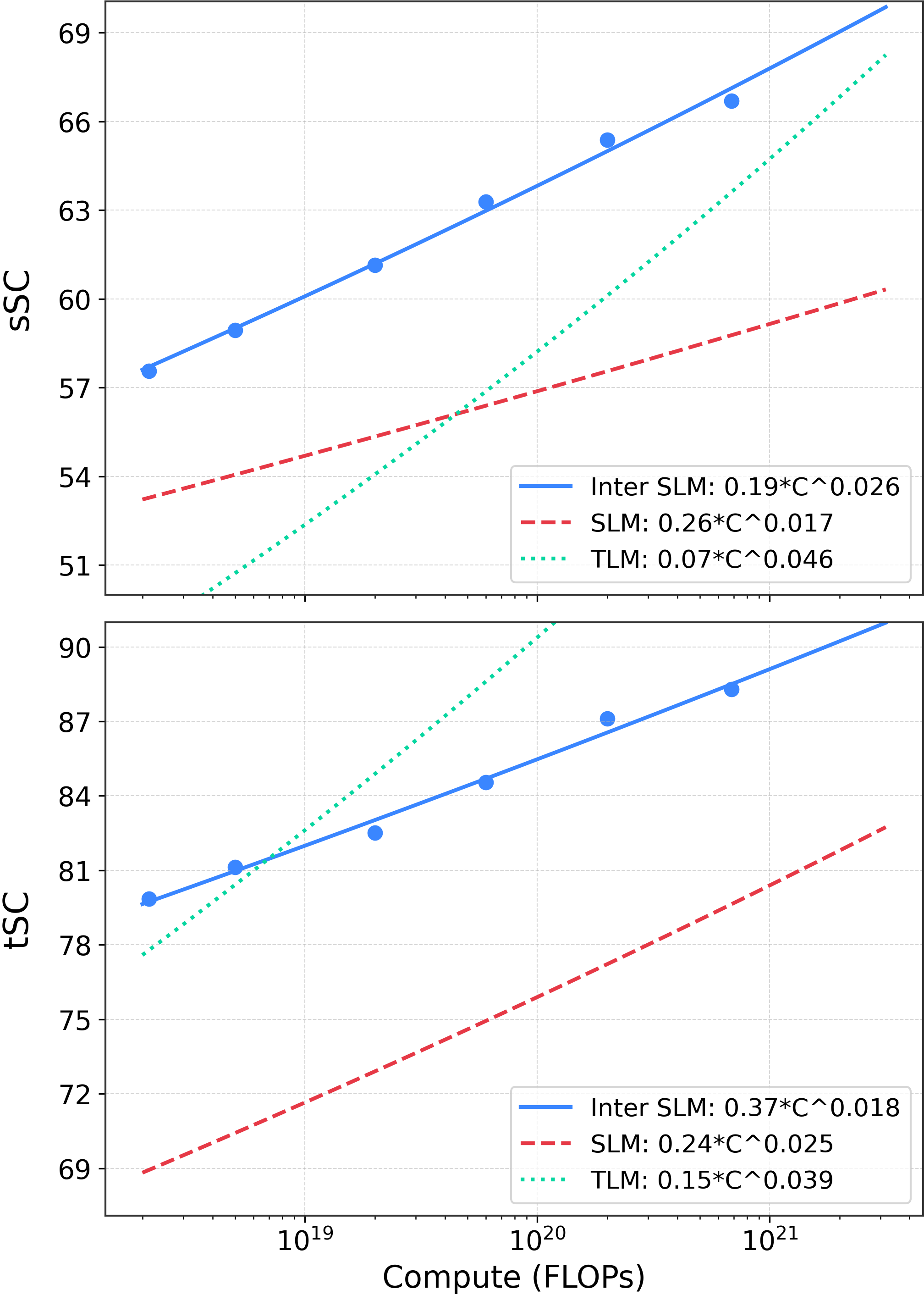

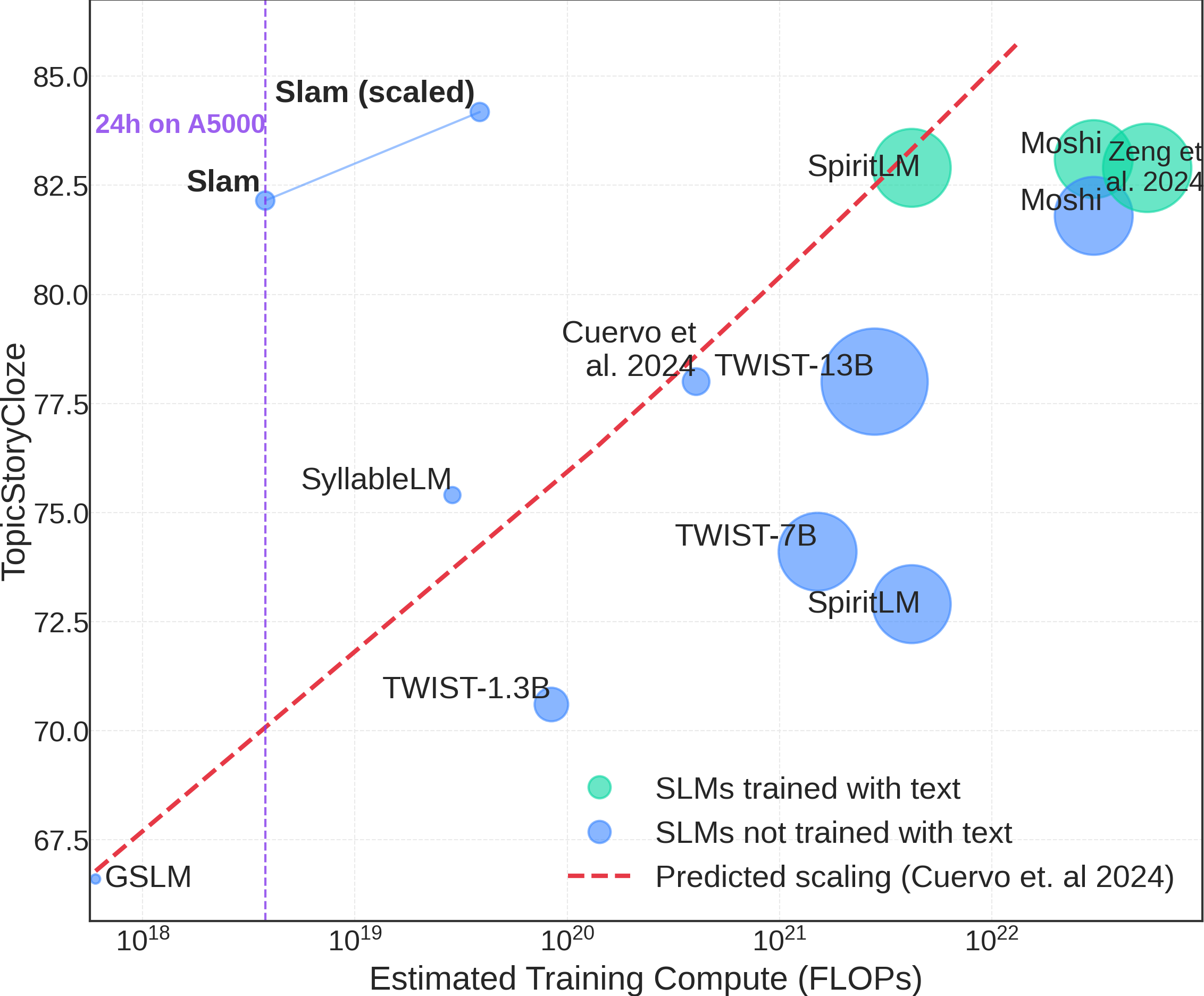

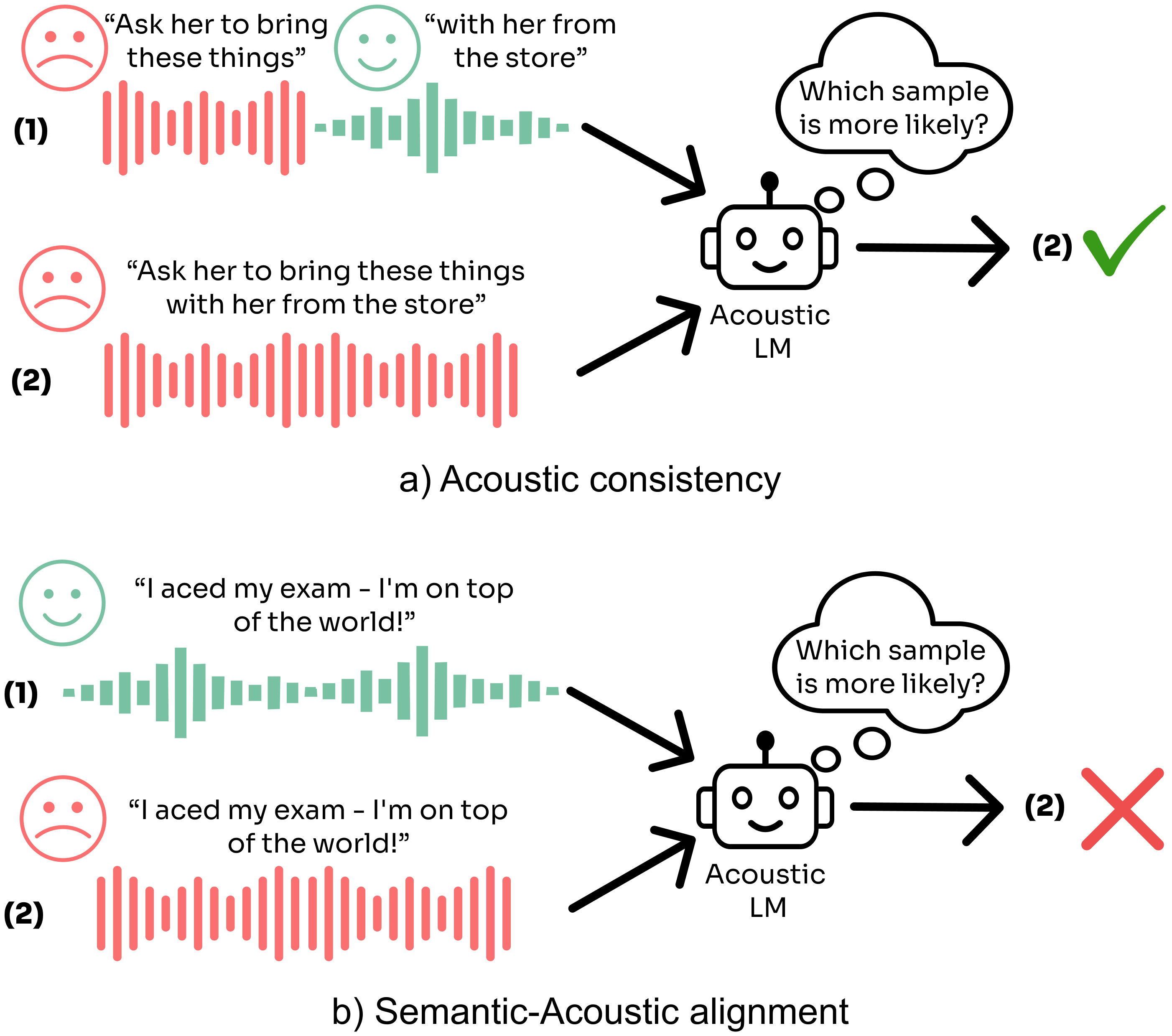

TL;DR - We post-train Code LLMs to reason about and simulate code execution given inputs. We then show that models can use this ability to self-verify and self-fix their own generated code. This gives an additional boost over standard reasoning models in competetive coding.