arXiv Preprint

Eliahu Horwitz*, Bar Cavia*, Jonathan Kahana*, Yedid Hoshen

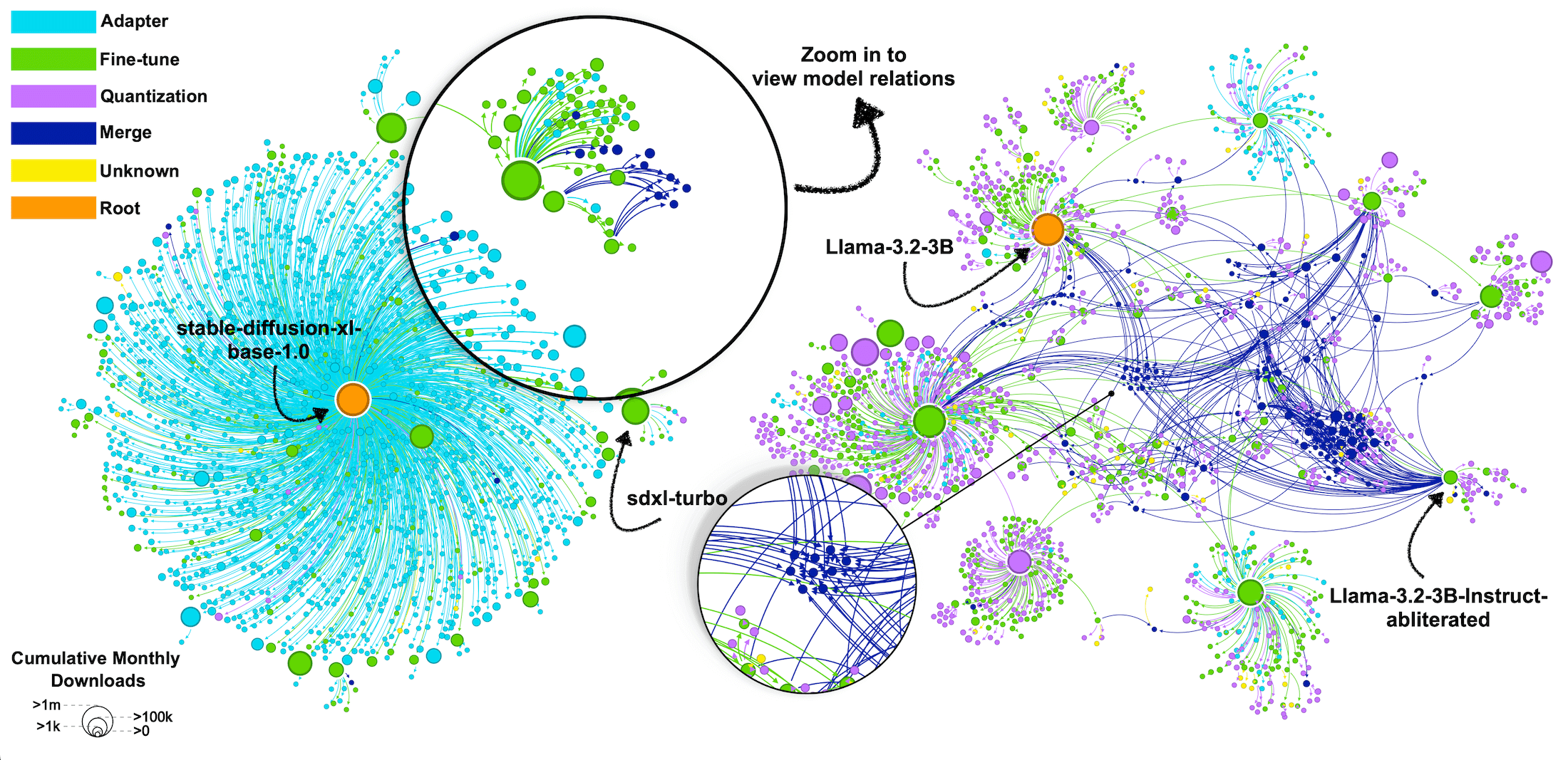

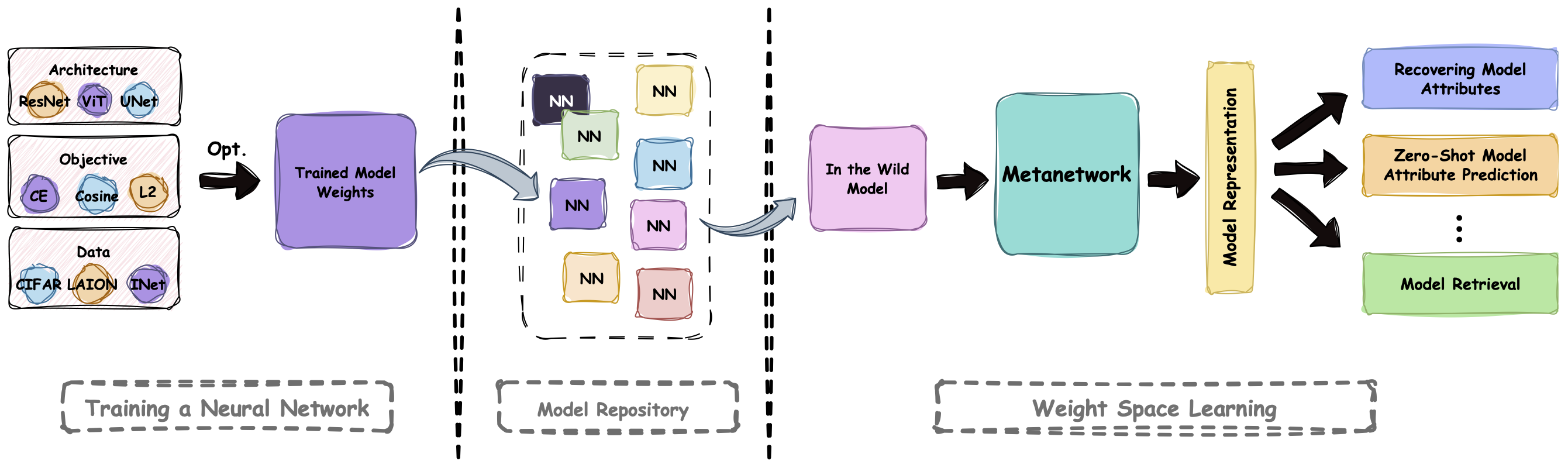

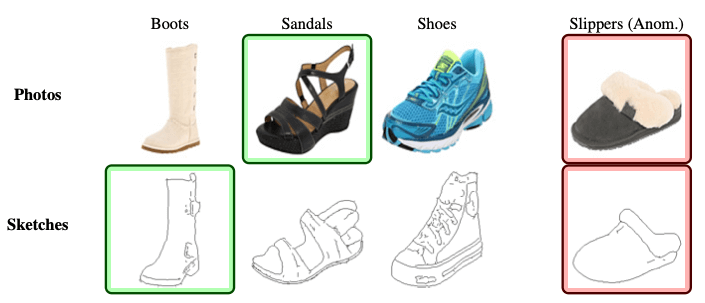

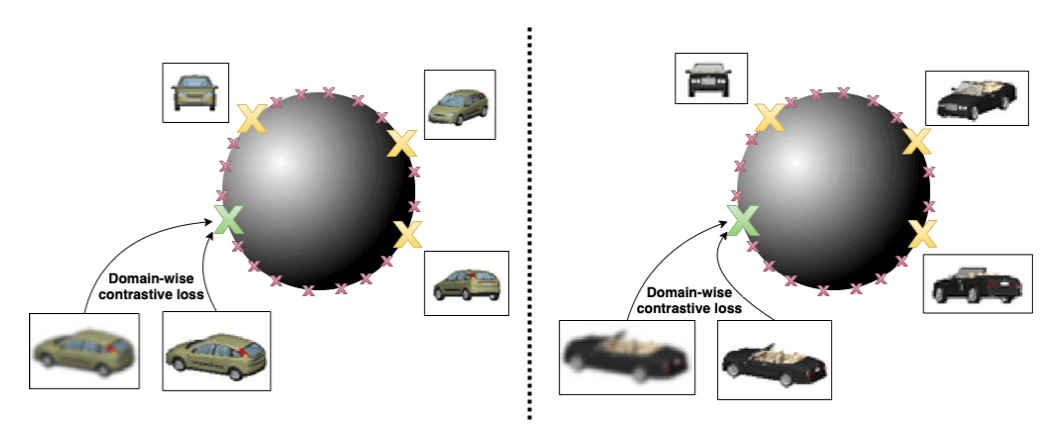

We identify a key property of real-world models: most public models belong to a small set of Model Trees, where all models within a tree are

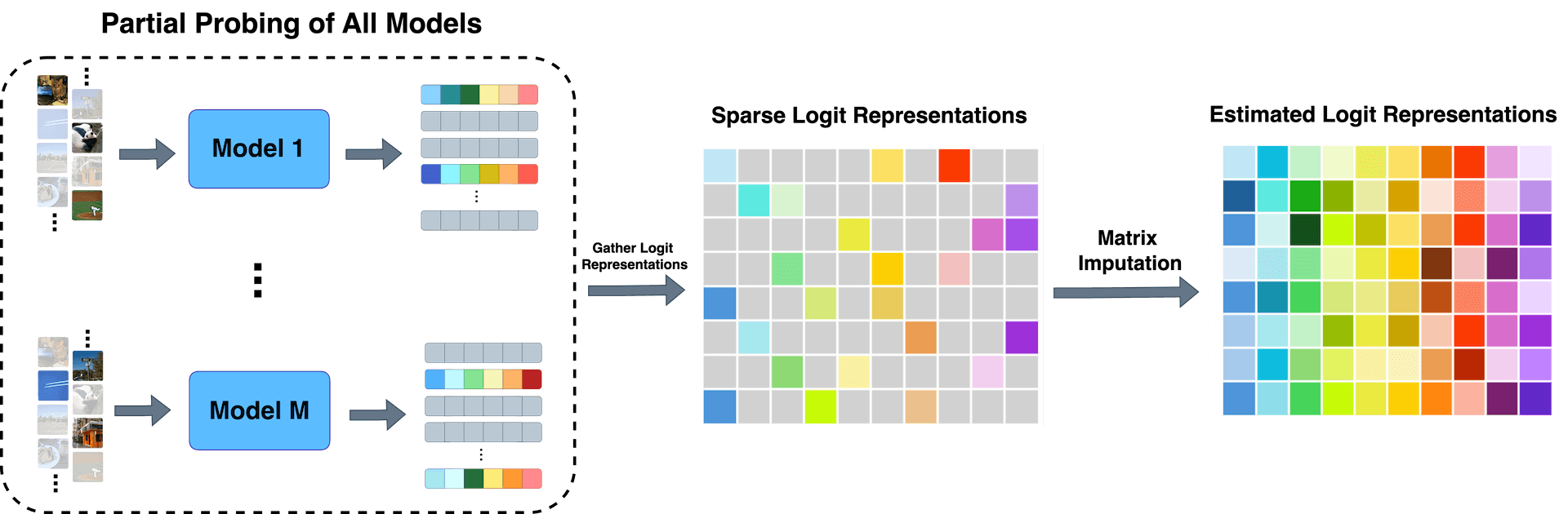

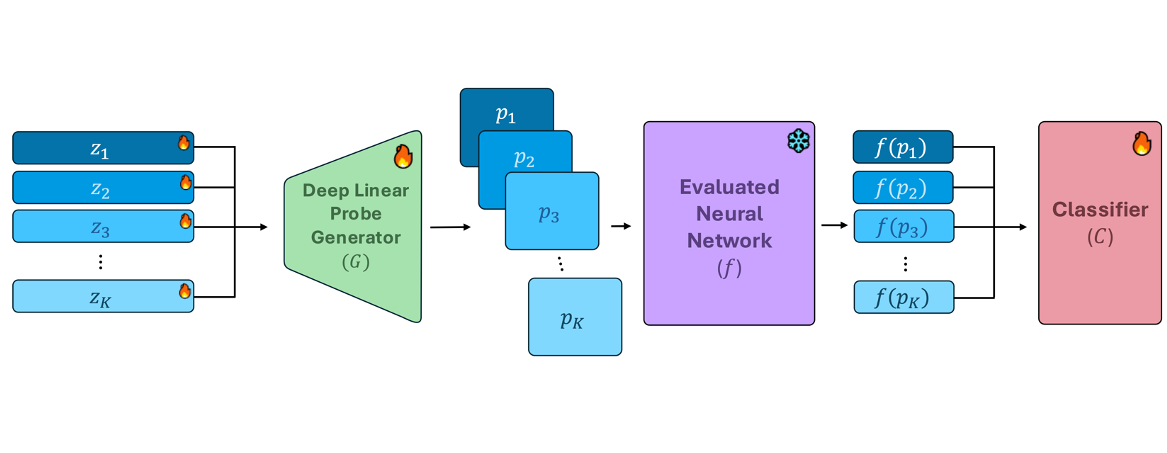

fine-tuned from a common ancestor (e.g., a foundation model). Importantly, we find that within each tree there is less nuisance variation between models. We introduce Probing

Experts (ProbeX), a

theoretically motivated, lightweight probing method. Notably, ProbeX is the first probing method designed to learn from the weights of just a single model layer.

Our results show that ProbeX can effectively map the weights of large models into a

shared weight-language embedding space. Furthermore, we demonstrate the impressive generalization of our method, achieving zero-shot model classification and retrieval.